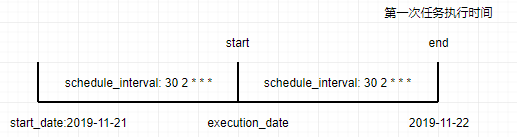

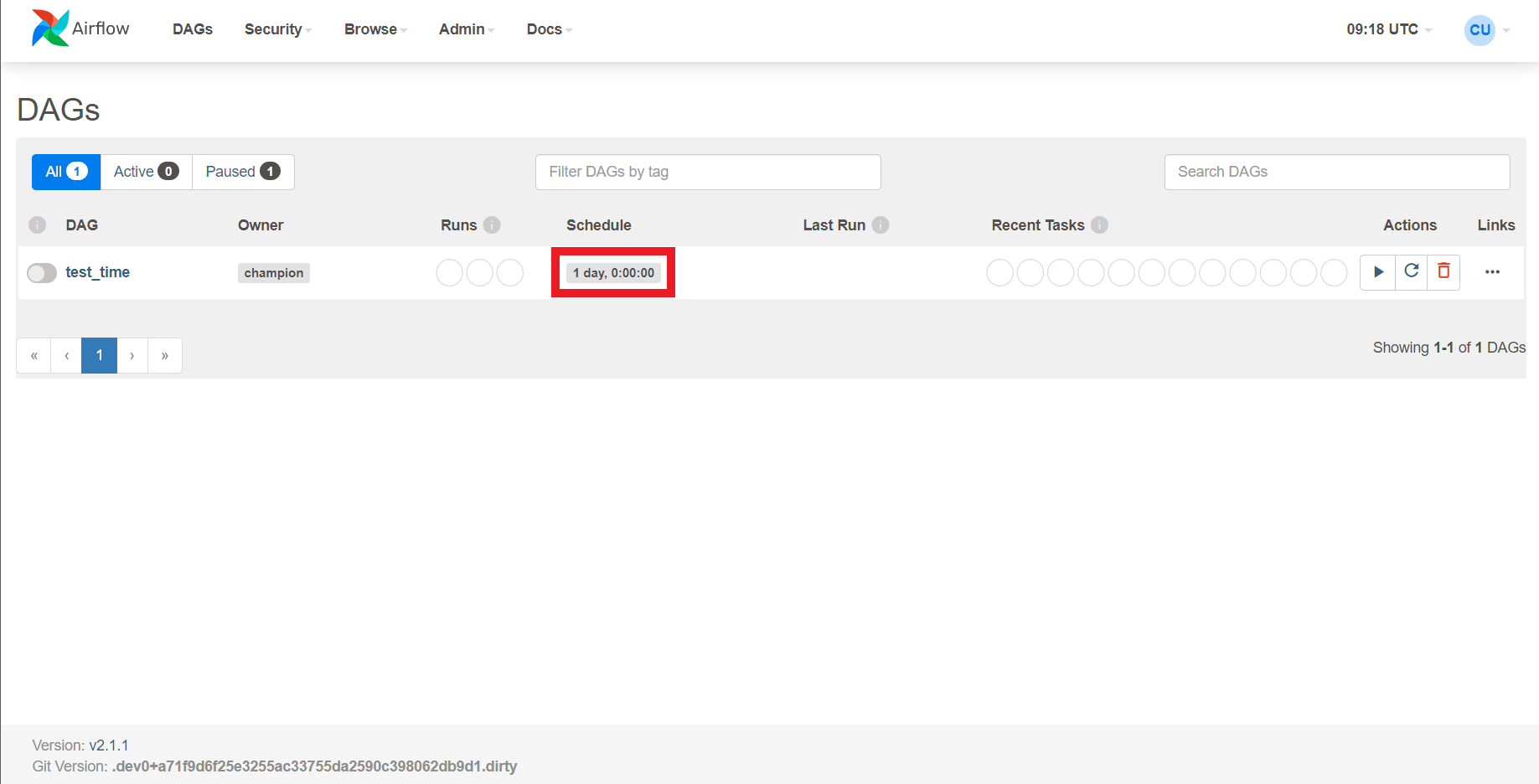

When triggering a DAG manually (via the web UI or via `airflow dags trigger`), some template params like `ds`, `ts`, and others derived from `dag_run.logical_date` are set to the specified execution timestamp. This is inconsistent with automated runs, where those fields are set to `data_interval_start`.

I expected `ds` to always equal `data_interval_start`. This behavior contradicts the documentation in a few places, and can cause tasks that depend on those template params to behave unintuitively. Quoting the docs in a few different places:

The "logical date" (also called `execution_date` in Airflow versions prior to 2.2) of a DAG run, for example, denotes the start of the data interval, not when the DAG is actually executed.

Note that `ds` (the YYYY-MM-DD form of `data_interval_start`) refers to date string, not date start as may be confusing to some.

However, it's worth noting that the "DAGs: Running DAGs" section of the docs does seem to explain this edge case:

For example, if a DAG run is manually triggered by the user, its logical date would be the date and time of which the DAG run was triggered, and the value should be equal to DAG run's start date. However, when the DAG is being automatically scheduled, with certain schedule interval put in place, the logical date is going to indicate the time at which it marks the start of the data interval, where the DAG run's start date would then be the logical date + scheduled interval.

Operating system: CentOS 7.4.

Log output: `INFO - dag_run.logical_date 22:23:58+00:00`

I'm not convinced that this is just a documentation issue: the fact that `logical_date` and all derived fields can have contextually different meanings seems fundamentally broken to me.
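To make the mismatch concrete, here is a small, self-contained Python model of the fields involved. It is a sketch under stated assumptions, not Airflow code. `RunContext`, `scheduled_run`, `manual_run`, and `interval_ds` are hypothetical names. The schedule is assumed to be daily. A manual run's data interval is assumed to be the last complete day ending at midnight on the trigger date. In the model, `ds` tracks `data_interval_start` for a scheduled run but the trigger timestamp for a manual one. The `interval_ds` property shows a workaround: it formats `data_interval_start` directly, so it does not depend on how the run was started.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical model, not Airflow's implementation: a daily schedule is assumed.
DAILY = timedelta(days=1)


@dataclass(frozen=True)
class RunContext:
    """Simplified stand-in for a DAG run's date-related template values."""

    logical_date: datetime
    data_interval_start: datetime
    data_interval_end: datetime

    @property
    def ds(self) -> str:
        # ds: YYYY-MM-DD form of logical_date
        return self.logical_date.strftime("%Y-%m-%d")

    @property
    def ts(self) -> str:
        # ts: ISO 8601 form of logical_date
        return self.logical_date.isoformat()

    @property
    def interval_ds(self) -> str:
        # Workaround: format data_interval_start instead of logical_date,
        # so the value means the same thing for scheduled and manual runs.
        return self.data_interval_start.strftime("%Y-%m-%d")


def scheduled_run(interval_start: datetime) -> RunContext:
    """Scheduled run: logical_date marks the start of the data interval."""
    return RunContext(
        logical_date=interval_start,
        data_interval_start=interval_start,
        data_interval_end=interval_start + DAILY,
    )


def manual_run(triggered_at: datetime) -> RunContext:
    """Manual run: logical_date is the trigger timestamp.

    Assumption: the data interval is the last complete day, ending at
    midnight on the trigger date.
    """
    end = triggered_at.replace(hour=0, minute=0, second=0, microsecond=0)
    return RunContext(
        logical_date=triggered_at,
        data_interval_start=end - DAILY,
        data_interval_end=end,
    )


if __name__ == "__main__":
    utc = timezone.utc
    sched = scheduled_run(datetime(2024, 1, 14, tzinfo=utc))
    manual = manual_run(datetime(2024, 1, 15, 10, 30, tzinfo=utc))
    print(sched.ds, sched.interval_ds)    # 2024-01-14 2024-01-14
    print(manual.ds, manual.interval_ds)  # 2024-01-15 2024-01-14
    print(manual.ts)                      # 2024-01-15T10:30:00+00:00
```

The dates above are arbitrary illustrations. In an actual DAG, the analogous workaround would presumably be to render `{{ data_interval_start | ds }}` instead of `{{ ds }}`, using Airflow's `ds` template filter. This assumes Airflow 2.2 or later, where `data_interval_start` is exposed to templates.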
